The bottleneck nobody talks about: why product leaders ration their curiosity

What changed when the cost of a data question dropped from two weeks to two minutes

Product is a weird job. You’re accountable for outcomes, but you don’t manage the teams that deliver them. You sit across engineering, design, analytics, ops, marketing, and you need all of them moving in the same direction.

The way you earn that influence is by being useful. By being the person who consistently shows up with something nobody else had looked at, and saying “I dug into this” in a way that changes the conversation.

Backing up a hypothesis with data is the best way to earn that trust. AI has changed this part of my workflow more than anything else I’ve adopted: I went from waiting two weeks for an analyst to run a query to answering my own questions in minutes.

The bottleneck nobody talks about

At most large companies, the analytics cycle works like this: PM writes a question. Analyst interprets it (often differently than intended). Analyst writes a query. PM reviews the results. Results raise more questions. Another round trip. Two weeks later, you have a number you’re 60% confident in.

This isn’t the analyst’s fault. They’re good at their job. The problem is structural: every question costs a round trip, so you learn to ask fewer questions. You go into meetings with your best guess instead of an answer. You end up arguing from conviction when you’d rather be arguing from evidence.

When the cost of a question drops from two weeks to two minutes, you stop rationing your curiosity. You ask the follow-up. You check the adjacent thing. The questions themselves get better.

The first version failed for an interesting reason

My first attempt was straightforward: connect an AI to our Databricks warehouse, give it a data catalog in a YAML file, and let it write SQL. I built it in Cursor over a couple of days.

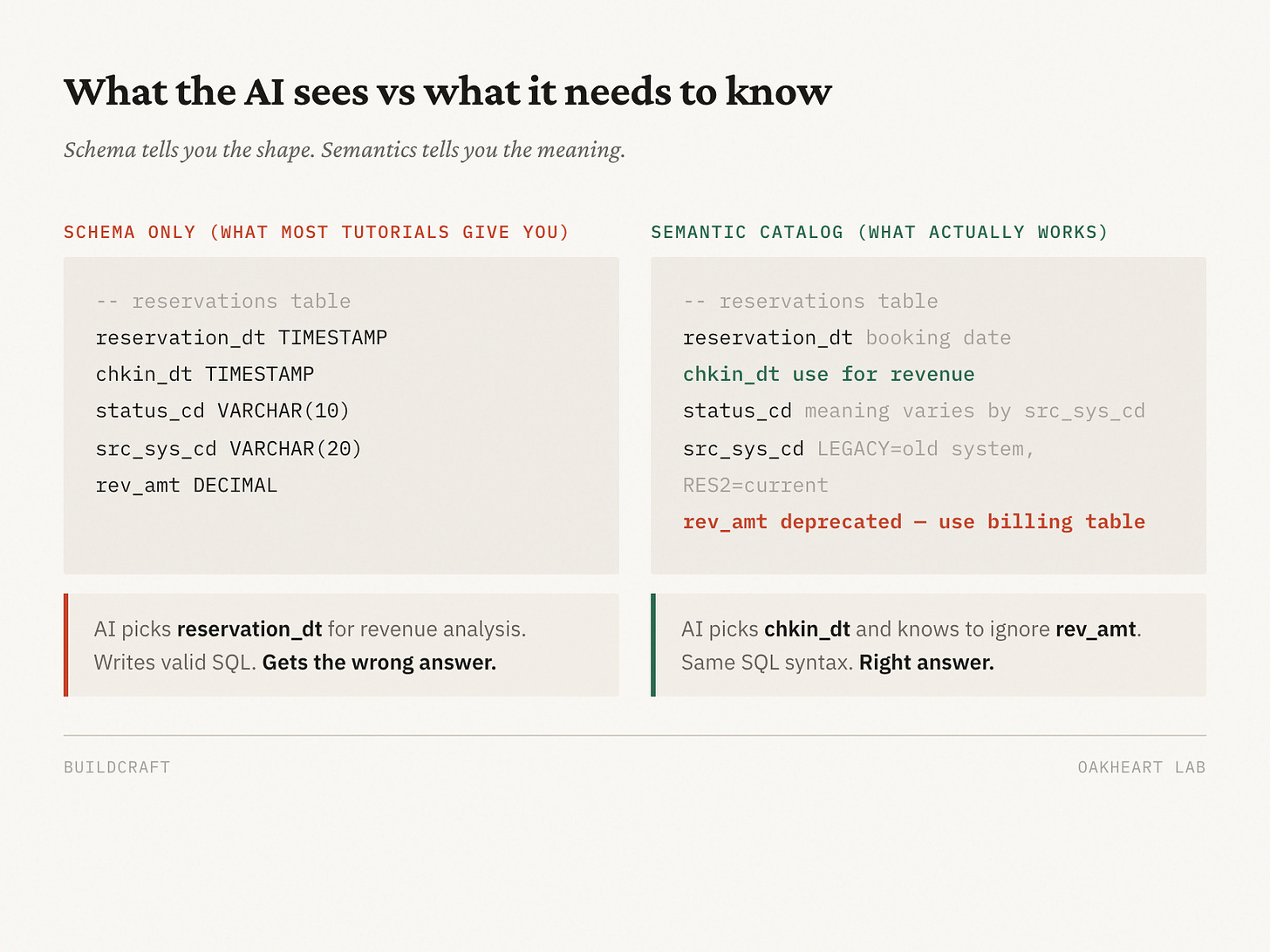

The problem surfaced immediately: the AI didn’t know what the data meant. It could write syntactically correct SQL, but it would confidently query the wrong columns and produce plausible answers that were just wrong. The data catalog I’d manually written was too thin. It described table names and column types but not the business logic, the gotchas, or the relationships between systems.

This is the failure mode that most “just connect AI to your database” tutorials skip entirely. The AI doesn’t know that chkin_dt is the field you use for revenue analysis, not reservation_dt. It doesn’t know that certain status codes mean different things depending on which system generated them. It doesn’t know that one table was deprecated six months ago but still has data flowing into it.
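To make the gap concrete, here is a minimal sketch of the difference between a thin catalog entry and one that carries business meaning. The `reservations` table name, the column types, and the wording of the notes are assumptions for illustration; only the two column names and the revenue rule come from the story above.

```python
from dataclasses import dataclass, field


@dataclass
class ColumnNote:
    name: str
    dtype: str
    meaning: str = ""  # business meaning, not just the type
    gotchas: list[str] = field(default_factory=list)


def render_for_prompt(table: str, columns: list[ColumnNote]) -> str:
    """Turn catalog notes into plain text an AI agent can read before writing SQL."""
    lines = [f"Table: {table}"]
    for col in columns:
        line = f"- {col.name} ({col.dtype})"
        if col.meaning:
            line += f": {col.meaning}"
        lines.append(line)
        lines.extend(f"    caveat: {g}" for g in col.gotchas)
    return "\n".join(lines)


# Thin catalog: names and types only (the kind of entry that failed).
thin = [ColumnNote("chkin_dt", "date"), ColumnNote("reservation_dt", "date")]

# Richer catalog: same columns, plus illustrative notes on how to use them.
rich = [
    ColumnNote("chkin_dt", "date", "use this field for revenue analysis"),
    ColumnNote(
        "reservation_dt",
        "date",
        gotchas=["do not use for revenue analysis; use chkin_dt instead"],
    ),
]

thin_text = render_for_prompt("reservations", thin)
rich_text = render_for_prompt("reservations", rich)
print(rich_text)
```

With the thin version, nothing in the context tells the agent which date column to pick. With the richer version, the rule sits right next to the column it applies to.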

What actually worked

The fix was building a separate layer that discovers and documents the data automatically. An agent crawls the data warehouse, profiles tables, cross-references column usage with the actual codebase, and builds a catalog that captures semantics, not just schema. What the data means. How it’s used. What the known issues are.
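The two mechanical pieces of that loop, profiling a table and checking where a column shows up in code, can be sketched in a few lines. This is not the agent described above, just an illustration under stated assumptions: an in-memory SQLite database stands in for the warehouse, and the table, rows, and source files are made up.

```python
import sqlite3


def profile_table(conn: sqlite3.Connection, table: str) -> dict[str, dict]:
    """Collect simple per-column stats: null rate and distinct-value count."""
    cols = [row[1] for row in conn.execute(f'PRAGMA table_info("{table}")')]
    total = conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()[0]
    profile = {}
    for col in cols:
        non_null, distinct = conn.execute(
            f'SELECT COUNT("{col}"), COUNT(DISTINCT "{col}") FROM "{table}"'
        ).fetchone()
        profile[col] = {
            "null_rate": (total - non_null) / total if total else 0.0,
            "distinct": distinct,
        }
    return profile


def count_code_mentions(column: str, sources: dict[str, str]) -> dict[str, int]:
    """Count how often a column name appears in each source file's text."""
    return {path: text.count(column) for path, text in sources.items() if column in text}


# Hypothetical stand-in data; a real run would point at the warehouse and the repo.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE reservations (chkin_dt TEXT, reservation_dt TEXT)")
conn.executemany(
    "INSERT INTO reservations VALUES (?, ?)",
    [("2024-01-05", "2023-12-01"), (None, "2023-12-02"), ("2024-01-05", "2023-12-03")],
)

profile = profile_table(conn, "reservations")
mentions = count_code_mentions(
    "chkin_dt",
    {
        "billing/revenue.py": "revenue_by_day = group_by(rows, 'chkin_dt')",
        "ops/report.py": "sort(rows, key='reservation_dt')",
    },
)
print(profile)
print(mentions)
```

Stats like these are only raw material. The semantic part of the catalog comes from combining them with how the code actually uses each column, for example noticing that revenue code reads `chkin_dt` and never `reservation_dt`.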

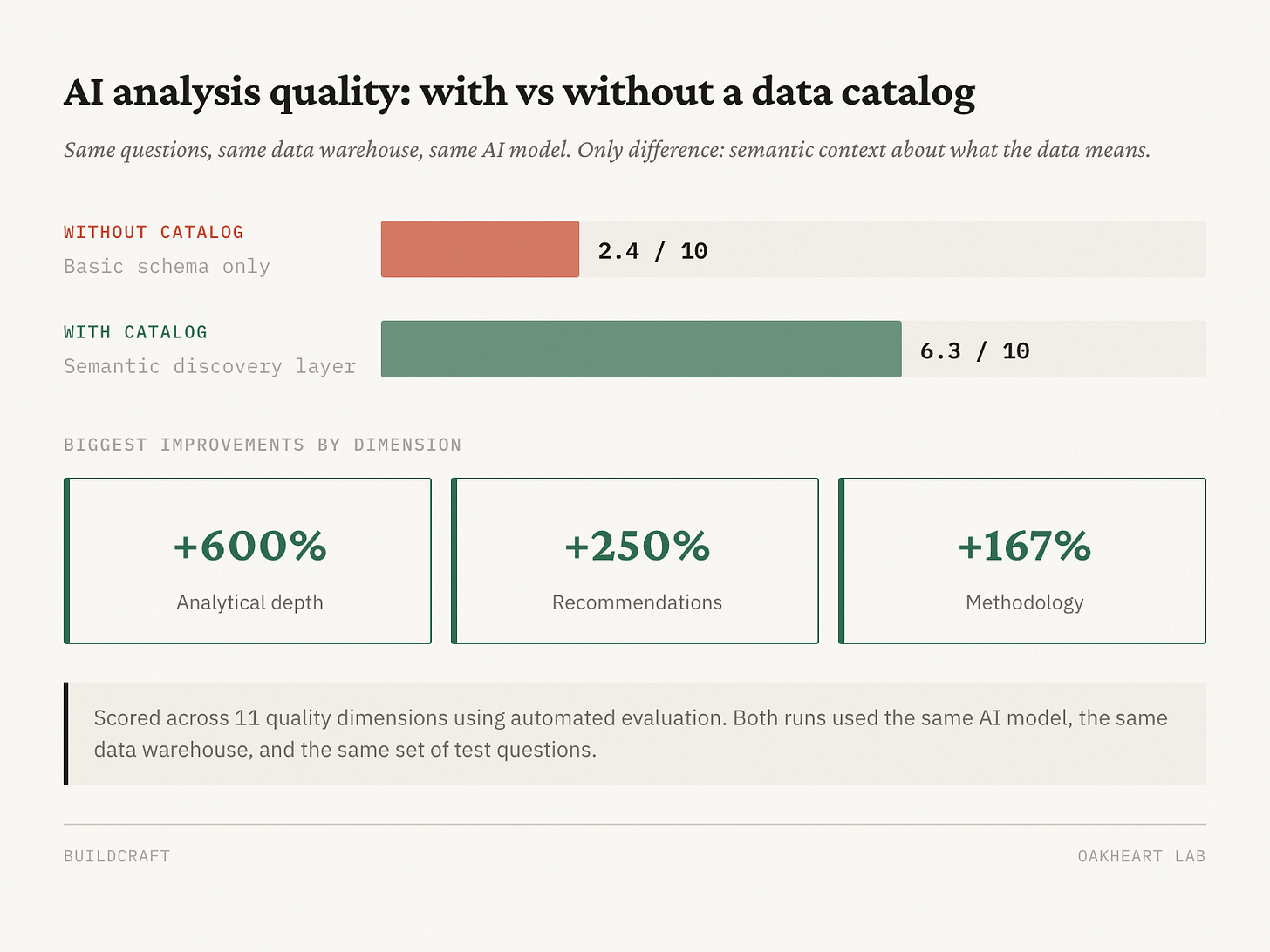

We tested this. AI analysis quality with just a basic connection scored 2.4 out of 10. With the discovery catalog layered in, the same questions scored 6.3 out of 10. Analytical depth jumped 600%. Methodology improved 167%. Recommendation quality went up 250%.
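For readers who want to relate those percentages to the scores, here is the arithmetic as a small sketch. It uses only the two overall scores quoted above; the baselines behind the three dimension-level percentages are not given here, so they are not recomputed.

```python
def pct_increase(before: float, after: float) -> float:
    """Relative increase from `before` to `after`, in percent."""
    return (after - before) / before * 100


def multiplier(pct: float) -> float:
    """Convert a percent increase into an 'x times the baseline' factor."""
    return 1 + pct / 100


overall = pct_increase(2.4, 6.3)
print(f"overall score: +{overall:.1f}%")
print(f"a 600% jump is {multiplier(600):.0f}x the baseline")
```

The overall score works out to an increase of about 160%, and a 600% jump means seven times the starting value, not six.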

The catalog also learns. When someone runs an investigation and discovers something new about the data (a join that works, a field that’s misnamed, a table that’s more reliable than the documented alternative), that knowledge feeds back in.

6.3 isn’t perfect. The agent sometimes misses relevant catalog context that’s right there. Long investigations go sideways — it’ll chase a dead end when a human would have pivoted minutes ago. And it still presents results with more confidence than the data warrants, despite every guardrail I’ve put in place.

But 6.3 is usable. I can sit down with the AI, have an actual data exploration conversation, steer it when it drifts, and with some back-and-forth, surface insights that are genuinely interesting. That’s a different world from 2.4.

What changes when you can answer your own questions

I can now go from a half-formed business question to a trustworthy answer in minutes. But the speed is almost beside the point. What actually changed is that I stopped rationing.

Within the first few weeks, I’d found a customer care cost pattern that nobody had connected across systems. Separately, I noticed early return behavior pointing to a fixable issue in our policies. One investigation into rate policy surfaced a potential impact worth tens of millions of dollars — something that had been sitting in the data but never came up because nobody had asked the right sequence of questions.

None of these were questions anyone had thought to ask. They came from following one answer to the next question, the kind of unrationed curiosity that the old two-week cycle made impossible. When you can iterate in real time, you notice things that sequential analysis misses.

You don’t need to persuade people that your hunch is right when you can show them something they didn’t know. The data does the work for you.

The analysts benefit too. When I’m not consuming their bandwidth with basic queries, they do the deeper statistical work and modeling that actually requires their expertise.

How to start

You don’t need to build what I built.

Start by connecting AI to your warehouse. Most cloud data platforms (Databricks, Snowflake, BigQuery) have APIs or MCP connectors that let an AI agent run queries. Give the agent read-only access and the risk stays low. The worst case is a wrong answer, which is the same worst case you already have with human analysts.
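Read-only is best enforced on the warehouse side, with credentials that cannot write. As an extra belt-and-braces layer, a client-side check can refuse anything that does not look like a plain query before it is sent. The sketch below is one conservative way to do that; the function name and keyword list are my own assumptions, not part of any platform API, and it will reject some harmless queries (for example, a string literal containing a semicolon).

```python
import re

# A query must start with SELECT or WITH to be considered at all.
_ALLOWED_START = re.compile(r"^\s*(select|with)\b", re.IGNORECASE)

# Any of these whole words anywhere in the text causes a rejection.
_WRITE_KEYWORDS = re.compile(
    r"\b(insert|update|delete|merge|drop|alter|create|truncate|grant|revoke)\b",
    re.IGNORECASE,
)


def is_read_only(sql: str) -> bool:
    """Conservative check: one statement, starts with SELECT/WITH, no write keywords."""
    body = sql.strip().rstrip(";")
    if ";" in body:  # reject multi-statement input
        return False
    if not _ALLOWED_START.match(body):
        return False
    return not _WRITE_KEYWORDS.search(body)


for candidate in [
    "SELECT chkin_dt, COUNT(*) FROM reservations GROUP BY chkin_dt",
    "SELECT 1; DELETE FROM reservations",
    "DROP TABLE reservations",
]:
    print(is_read_only(candidate), "|", candidate)
```

Erring on the side of rejection is deliberate: a blocked query costs a retry, while a write that slips through costs a lot more.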

Then write down what the AI gets wrong. The first time it confidently queries the wrong column, note which column it should have used and why. The first time it misinterprets a status code, document the correct interpretation. That’s your data catalog. It doesn’t need to be a product. A markdown file works. You can get this far in a weekend.
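If it helps to keep the habit cheap, the note-taking itself can be a tiny helper that appends each correction to a markdown file. This is a sketch of one possible layout, not a required format; the entry structure, file name, and example table name are assumptions, and the demo writes to a temporary directory so it leaves nothing behind.

```python
import tempfile
from datetime import date
from pathlib import Path


def format_correction(table: str, column: str, mistake: str, fix: str, day: date) -> str:
    """Render one correction as a small markdown section."""
    return (
        f"## {table}.{column}\n"
        f"- Noted: {day.isoformat()}\n"
        f"- What the AI got wrong: {mistake}\n"
        f"- What is correct: {fix}\n"
    )


def append_correction(path: Path, entry: str) -> None:
    """Append an entry to the catalog file, creating the file if needed."""
    with path.open("a", encoding="utf-8") as fh:
        fh.write(entry + "\n")


entry = format_correction(
    table="reservations",  # hypothetical table name
    column="reservation_dt",
    mistake="used reservation_dt for revenue analysis",
    fix="use chkin_dt for revenue analysis",
    day=date(2024, 1, 15),
)

with tempfile.TemporaryDirectory() as tmp:
    catalog = Path(tmp) / "data_catalog.md"
    append_correction(catalog, entry)
    append_correction(catalog, entry)  # a second note simply lands below the first
    saved = catalog.read_text(encoding="utf-8")

print(saved)
```

The resulting file can be pasted into the agent’s context as is, which is all a first catalog needs to do.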

After that, automate the discovery. Build an agent that profiles your tables, checks how columns are actually used in application code, and enriches the catalog without you writing every entry by hand. This is what took us from 2.4 to 6.3, and it’s where the investment starts paying off.

Good insight is viral inside a company. When you surface something that changes a decision, people notice. The more calls you can back with evidence, the more your cross-functional partners trust your judgment — not because you positioned yourself well, but because you were genuinely useful. And it starts with the simplest possible shift: stop rationing your curiosity.