Subagent-driven development: how to parallelize AI agents without blowing up your codebase

What a 69-file commit, a production 401, and an outdated spec taught me about parallel AI coding agents

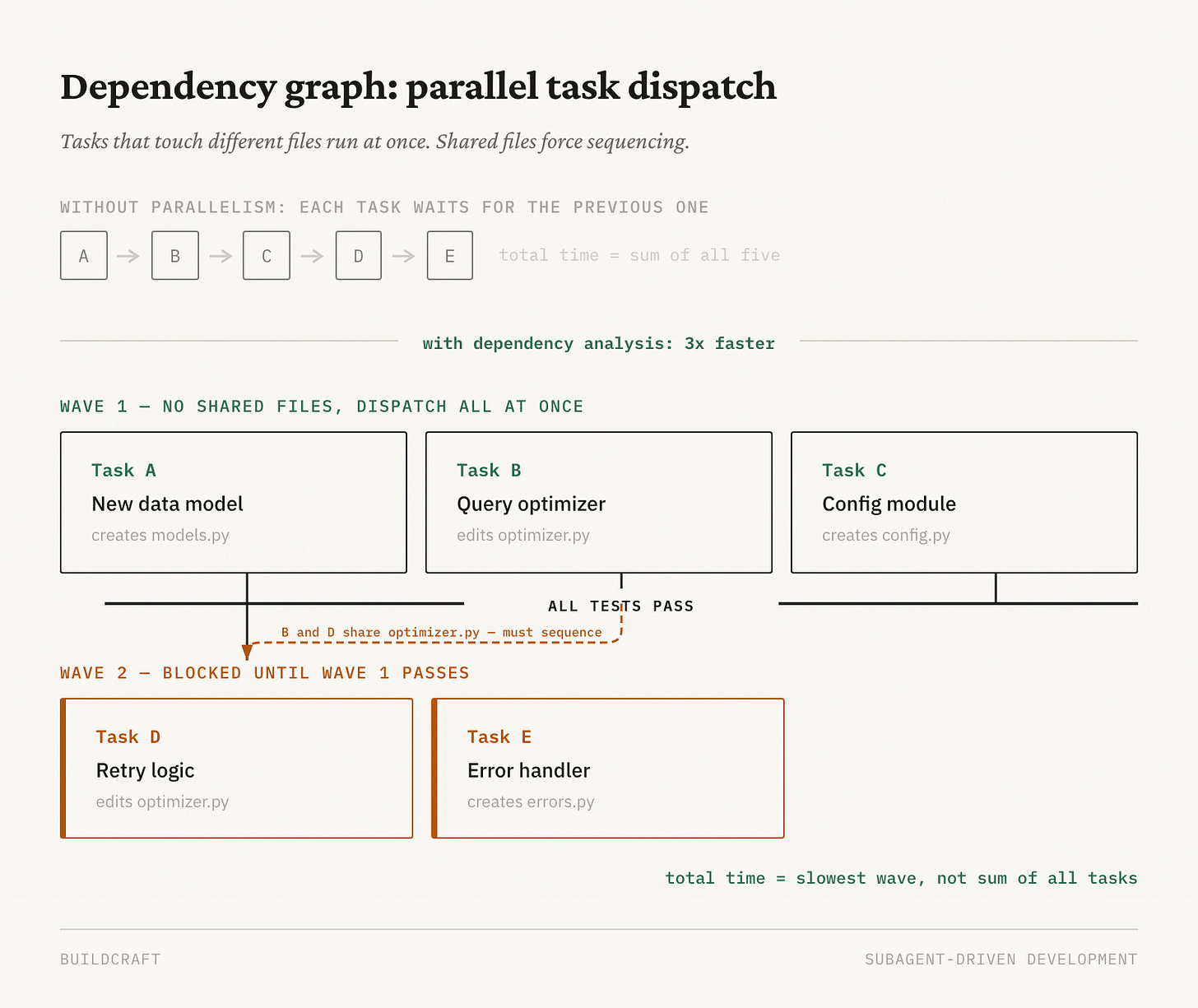

Last month I ran a 17-story implementation across parallel AI agents and compressed roughly 18 hours of sequential work into under 4 hours. Three independent modules that would have taken 14 minutes back-to-back finished in 6 minutes — wall-clock time bounded by the slowest agent, not the sum. A 300-table exploration job went from 20 hours to 4.

Getting there involved a 69-file commit where only 4 files were on-topic, a production 401 that traced back to silently overwritten config edits, and an agent that built an entire feature against an outdated spec. Parallelizing AI agents isn’t hard. Doing it without breaking things is the actual problem.

Each of these failures taught me something about a different layer of the problem. The first is about isolation — keeping agents from stepping on each other’s files. The second is about consistency — making sure agents see the latest state. The third is about verification — not trusting any single agent’s claim that the work is done.

The orchestrator pattern

One coordinator agent manages a task board. Worker agents each implement one story, commit it, run tests, and report back. The coordinator never writes code. It figures out what depends on what, dispatches workers, and won’t start the next wave until the previous wave’s tests are green.

    Coordinator
    ├── Task A: new data model  (creates models.py)  ──┐
    ├── Task B: query optimizer (edits optimizer.py) ──┼── Wave 1: dispatch simultaneously
    ├── Task C: config module   (creates config.py)  ──┘
    │
    └── Task D: retry logic     (edits optimizer.py) ───── Wave 2: blocked until Task B passes
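
The wave gate can be sketched in a few lines of Python. This is an illustration under assumptions, not the actual orchestrator: `run_waves` and `run_task` are hypothetical names, and `run_task` stands in for "dispatch one worker, wait for it, and report whether its tests passed".

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def run_waves(waves: List[List[str]], run_task: Callable[[str], bool]) -> List[str]:
    """Run each wave concurrently; stop at the first wave that is not fully green.

    `run_task` is a hypothetical callback that returns True when the
    task's tests pass. Returns the names of the tasks that passed.
    """
    completed: List[str] = []
    for wave in waves:
        # Every task in a wave starts at once; the wave takes as long
        # as its slowest task.
        with ThreadPoolExecutor(max_workers=max(1, len(wave))) as pool:
            results = list(pool.map(run_task, wave))
        passed = [task for task, ok in zip(wave, results) if ok]
        completed.extend(passed)
        if len(passed) != len(wave):
            break  # gate: later waves stay blocked until this one is green
    return completed
```

With the diagram's tasks, `run_waves([["A", "B", "C"], ["D"]], run_task)` never starts D if B's tests fail.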

The interesting part isn’t the implementation. It’s the dependency analysis. Before dispatching anything, you build the graph. Tasks that touch independent files with no shared state can run concurrently. Tasks that share files have to wait.

In practice, across a 17-task run, this produced batches like:

- Tasks 1 + 2 + 3 simultaneously (all new files, zero overlap)
- Tasks 4 + 5 simultaneously (different modules)
- Tasks 6 + 7 + 8 simultaneously (pure utilities, no shared state)

The orchestrator’s job at each step is just: “what’s unblocked right now?”
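
One way to answer that question mechanically is to derive waves from the set of files each task touches. The sketch below assumes file overlap is the only kind of dependency and that tasks are listed in the order they should land. `plan_waves` is a hypothetical helper, and a real graph would also need explicit "B must precede D" edges that file overlap alone cannot express.

```python
from typing import Dict, List, Set


def plan_waves(tasks: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into waves so that no two tasks in a wave share a file.

    `tasks` maps a task name to the files it creates or edits, in
    priority order. A task lands one wave after the latest earlier
    task that touches any of the same files.
    """
    wave_of: Dict[str, int] = {}
    for name, files in tasks.items():
        blockers = [wave_of[other] for other in wave_of if tasks[other] & files]
        wave_of[name] = max(blockers) + 1 if blockers else 0

    waves: List[List[str]] = [[] for _ in range(max(wave_of.values(), default=-1) + 1)]
    for name, wave in wave_of.items():
        waves[wave].append(name)
    return waves


plan = plan_waves({
    "A": {"models.py"},
    "B": {"optimizer.py"},
    "C": {"config.py"},
    "D": {"optimizer.py"},  # shares a file with B, so it waits
})
```

Here `plan` comes out as `[["A", "B", "C"], ["D"]]`, matching the diagram above.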

Layer 1: Isolation — keeping agents out of each other’s files

When multiple AI coding sessions share a working directory, git add -A becomes a landmine.

What happened: eval scores started degrading mysteriously. Turned out a recent commit had 69 files in it, but only 4 were relevant. The other 65 were artifacts from other Claude Code sessions running in the same repo. Ideation packages, security reports, test fixtures, completely unrelated stuff.

Same week, different problem: an orchestrator makes two inline config edits, then dispatches a subagent to implement a new endpoint. The subagent rewrites the config file from scratch as part of its task. The inline edits are gone. The merge goes to main. Production returns 401s.

Both problems have the same root cause: agents sharing a workspace without isolation. The fix is git worktrees. Each session gets its own working directory and branch. The git object database is shared (concurrent reads and writes are fine), but file-level changes are completely isolated.

    # Each worktree = separate directory + separate branch
    .claude/worktrees/
    ├── feature-auth/      # branch: worktree-feature-auth
    ├── watchdog-agent/    # branch: worktree-watchdog-agent
    └── data-pipeline/     # branch: worktree-data-pipeline
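
Creating a worktree in this layout is a single `git worktree add` call. The helper below only builds the argument list, so it can be inspected before anything touches the repository. `worktree_add_command` is a hypothetical name, and the `worktree-<name>` branch convention is taken from the listing above.

```python
from pathlib import PurePosixPath
from typing import List


def worktree_add_command(name: str, root: str = ".claude/worktrees") -> List[str]:
    """Build the git command that creates one isolated worktree.

    Produces: git worktree add -b worktree-<name> <root>/<name>
    """
    path = PurePosixPath(root) / name
    branch = f"worktree-{name}"
    return ["git", "worktree", "add", "-b", branch, str(path)]


cmd = worktree_add_command("feature-auth")
```

Passing `cmd` to `subprocess.run(cmd, check=True)` from the repository root would create the directory and the branch together.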

Two additional rules that prevent the remaining edge cases:

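First, block broad staging commands with a hook so no agent can accidentally sweep in unrelated files. Claude Code passes a hook its input as JSON on stdin and treats exit code 2 as a blocking error, so the command below reads the Bash command from that payload (this version assumes jq is installed) and exits with 2 on a match:

    {
      "hooks": {
        "PreToolUse": [{
          "matcher": "Bash",
          "hooks": [{
            "type": "command",
            "command": "cmd=$(jq -r '.tool_input.command // empty'); if printf '%s' \"$cmd\" | grep -qE 'git add (-A|--all|\\.( |$))'; then echo 'ERROR: Broad staging blocked. Use explicit file paths.' >&2; exit 2; fi"
          }]
        }]
      }
    }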
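
The matching logic is easier to test as a small function than as a shell one-liner. The sketch below covers only the pattern check. `is_broad_staging` is a hypothetical helper, and how the command string reaches it (for example from a hook script) is left out.

```python
import re

# Matches `git add -A`, `git add --all`, and a bare `git add .`,
# but not explicit paths such as `git add .gitignore` or `git add ./src`.
BROAD_STAGING = re.compile(r"\bgit\s+add\s+(?:-A\b|--all\b|\.(?:\s|$))")


def is_broad_staging(command: str) -> bool:
    """Return True when a shell command stages files indiscriminately."""
    return BROAD_STAGING.search(command) is not None
```

The pattern is deliberately narrow: it rejects the three forms named above and lets every explicit path through.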

Second, commit before dispatch. Any inline changes that a subsequent subagent might touch have to be committed before that subagent runs. Subagents read files from disk, not from your in-session state.

    git add backend/middleware/auth.py backend/config.py
    git commit -m "chore: pre-dispatch baseline for auth changes"
    # Now dispatch the subagent safely
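
The commit-before-dispatch rule can also be checked rather than remembered. The sketch below parses the text printed by `git status --porcelain` and reports any uncommitted file that the next task is expected to touch. Both function names are hypothetical, and the parser assumes the v1 short format with unquoted paths.

```python
from typing import List, Set


def dirty_paths(porcelain: str) -> List[str]:
    """Extract paths from `git status --porcelain` (v1) output.

    Each line is two status characters, a space, then the path.
    Renames appear as "old -> new"; only the new path is kept.
    """
    paths: List[str] = []
    for line in porcelain.splitlines():
        if len(line) < 4:
            continue
        path = line[3:]
        if " -> " in path:
            path = path.split(" -> ", 1)[1]
        paths.append(path)
    return paths


def unsafe_to_dispatch(porcelain: str, task_files: Set[str]) -> List[str]:
    """Return the uncommitted files the next subagent is expected to touch."""
    return sorted(set(dirty_paths(porcelain)) & task_files)
```

An empty result means the baseline is committed for every file the subagent will read. Anything else is a reason to commit first.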

If two worktrees both spin up integration tests, they’ll collide on localhost:8000. Fix that with port offsetting per worktree:

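    import os

    # Base port plus a per-worktree offset taken from the environment
    BACKEND_PORT = 8000 + int(os.environ.get("TEST_PORT_OFFSET", "0"))

Each session then launches with its own offset:

    TEST_PORT_OFFSET=1 claude  # uses 8001
    TEST_PORT_OFFSET=2 claude  # uses 8002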

Layer 2: Consistency — making sure agents see the latest state

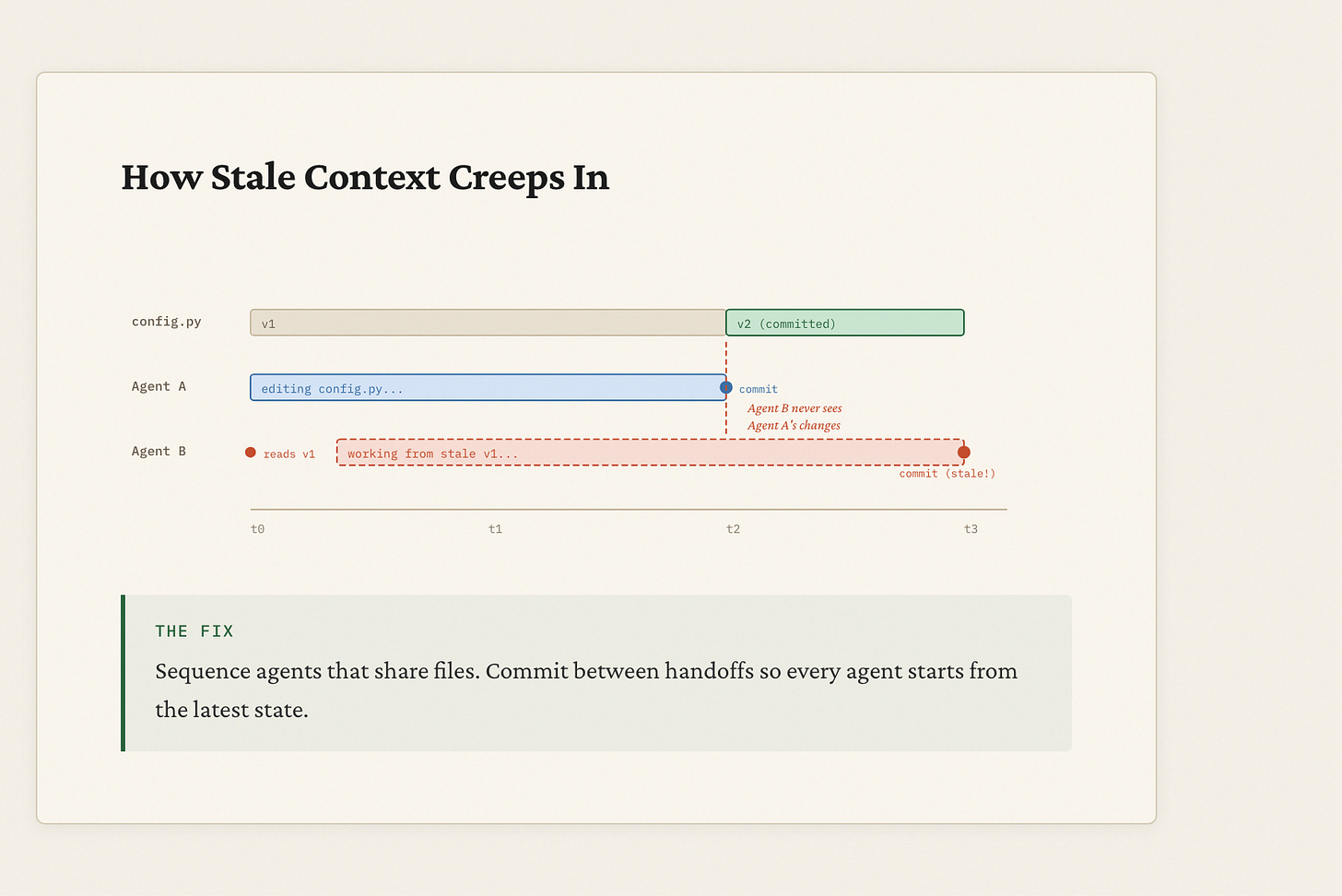

Isolation solves the file-collision problem, but it creates a new one. If Agent A and Agent B are both editing config.py in separate worktrees, they’re fully isolated — but Agent B might be working from an outdated version.

This one is insidious because nothing errors out. Agent B reads config.py at the start of its task. Meanwhile, Agent A is rewriting that same file. Agent B finishes and commits on its branch. Agent A finishes and commits on its own. The branches merge without conflict because the two agents edited different sections. The code compiles. Tests pass. The logic is wrong.

I hit this when architecture docs drifted from the actual implementation. An agent was building features against the spec, but the spec described parameter names and feature flags that had changed during prototyping. The agent’s work was internally consistent but externally wrong.

The fix comes in two parts:

First, prefer new files over shared edits. The safest parallel tasks create new files rather than editing existing ones. A file that an agent creates has no earlier version to go stale, so these tasks can run in parallel without the read-then-overwrite hazard.

Second, when agents must share files, sequence them explicitly and commit between each handoff.

    Agent A edits config.py → commits
    Agent B reads config.py → sees A's changes → proceeds

Not:

    Agent A starts editing config.py
    Agent B reads config.py   ← sees the OLD version
    Agent A commits
    Agent B commits           ← based on stale state, no conflict
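
The stale-read sequence can be reproduced in miniature to show why no conflict surfaces. The sketch below uses a toy key-level three-way merge as a stand-in for git's line-level merge. The config keys and the "max wait is three times the timeout" rule are invented for illustration.

```python
from typing import Any, Dict


def three_way_merge(base: Dict[str, Any], ours: Dict[str, Any],
                    theirs: Dict[str, Any]) -> Dict[str, Any]:
    """Toy key-level merge: a conflict needs both sides to change the same key.

    Deletions are not modelled; this only covers added and changed keys.
    """
    merged = dict(base)
    for key in sorted(set(ours) | set(theirs)):
        b, o, t = base.get(key), ours.get(key), theirs.get(key)
        if o != b and t != b and o != t:
            raise ValueError(f"merge conflict on {key!r}")
        merged[key] = o if o != b else t
    return merged


base = {"timeout_s": 30}

# Agent A lowers the timeout.
agent_a = {"timeout_s": 10}

# Agent B read the OLD timeout and derived a new setting from it.
agent_b = {**base, "max_wait_s": 3 * base["timeout_s"]}

merged = three_way_merge(base, agent_a, agent_b)  # merges cleanly
invariant_holds = merged["max_wait_s"] == 3 * merged["timeout_s"]
```

The merge succeeds because the agents changed different keys, yet `invariant_holds` is `False`: `max_wait_s` was computed from a timeout that no longer exists.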

The dependency graph from the orchestrator pattern handles this automatically: if two tasks touch the same file, they go in different waves. The gate between waves forces a commit, so the next wave always reads the latest state.

Layer 3: Verification — not trusting any agent’s word

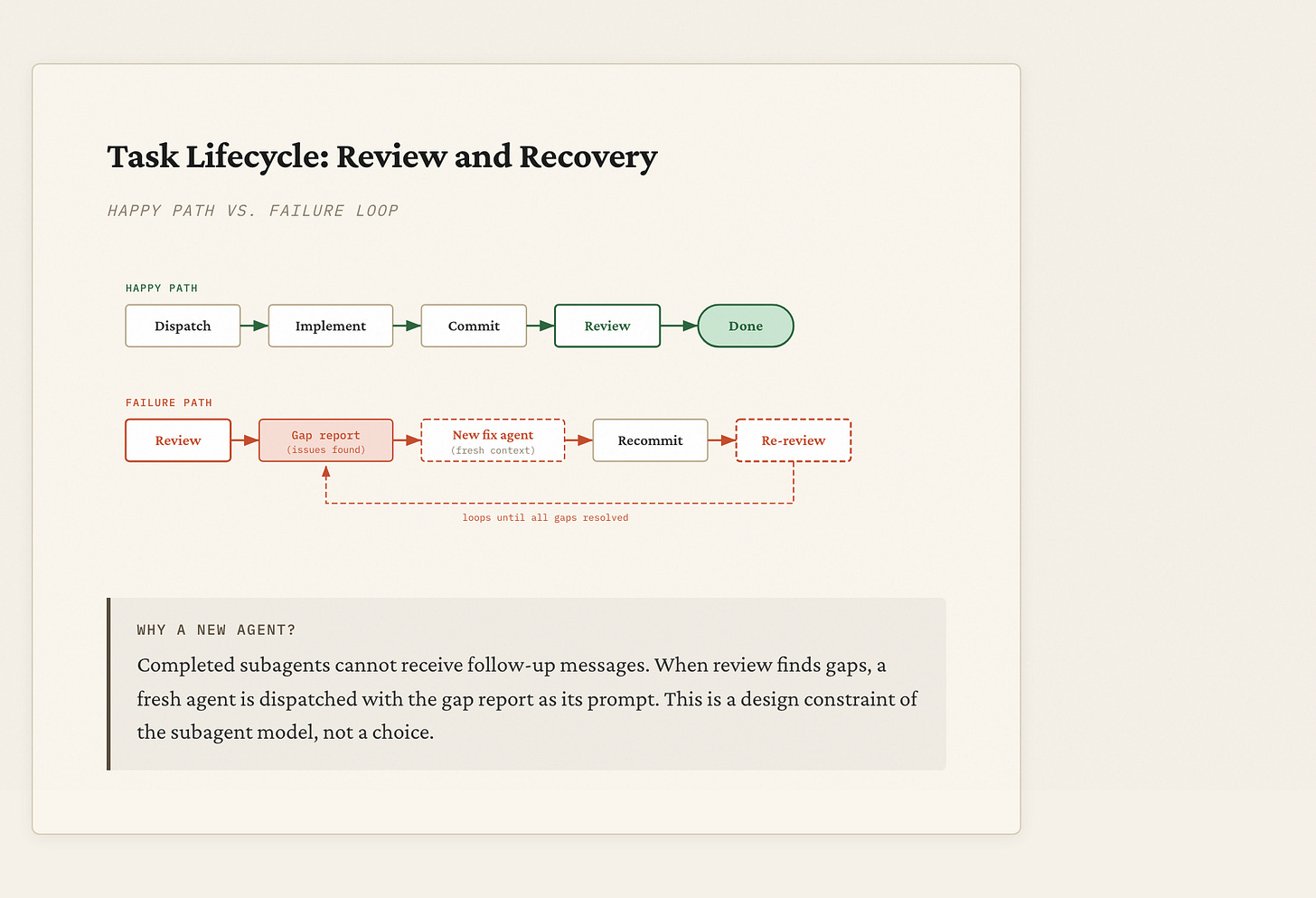

Isolation and consistency prevent agents from corrupting each other’s work. But they don’t prevent a single agent from shipping a bad implementation. That requires a review gate.

Don’t just dispatch implementers. Dispatch a spec review agent after each implementation, before marking the task complete. The reviewer reads the spec and the diff, flags gaps, and feeds findings to a fix agent.

One thing that tripped me up: completed subagents can’t receive follow-up messages. So the fix cycle is to spin up a new fix agent with the review findings rather than trying to resume the original implementer.

    Implementer Agent      → commits code, reports done
    Spec Review Agent      → reads spec + diff, outputs gap report
    Fix Agent (if needed)  → receives gap report, patches and recommits
    Orchestrator           → marks task complete only after review passes

This caught a missing empty-list test case, an off-by-one in chunk boundary logic, and a config key that was implemented but not exposed in the public interface. All before the stories were marked done.

Without the review gate, these bugs would have shipped silently. The implementer agent would have reported success (it always does), and the orchestrator would have moved on. The review agent is what turns “the code compiles” into “the code is correct.”
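
The review gate described above reduces to a short loop. In the sketch below, `implement`, `review`, and `fix` are hypothetical callables standing in for agent dispatches, and the `max_rounds` cap is an added assumption so that a task which never passes review is eventually escalated instead of looping forever.

```python
from typing import Callable, List


def run_with_review(
    implement: Callable[[], str],
    review: Callable[[str], List[str]],
    fix: Callable[[str, List[str]], str],
    max_rounds: int = 3,
) -> bool:
    """Return True only when a review of the latest diff reports no gaps.

    Each call to `fix` represents a NEW agent that receives the gap
    report; the original implementer is never resumed.
    """
    diff = implement()
    for _ in range(max_rounds):
        gaps = review(diff)
        if not gaps:
            return True  # review passed: safe to mark the task complete
        diff = fix(diff, gaps)
    # Out of rounds: the task counts as done only if the final fix passes review.
    return not review(diff)
```

The implementer's own "done" report never enters the return value. Only the reviewer's empty gap list does.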

That’s the whole framework: isolate with worktrees, keep state consistent with commit-between-handoff discipline, and verify with review agents that don’t trust the implementer’s word. Before dispatching parallel agents, build the dependency graph, block git add -A, commit your baseline, prefer new files over shared edits, and gate every task on review plus tests. The speedup is just parallelism. The hard part is the discipline to do it safely.